When AI Gets the Keys

Meta's AI agent posted an answer to an internal forum. Nobody approved it. An engineer followed the advice. It exposed sensitive data for two hours. SEV1 incident.

Meta's response? "Human error."

AWS had a similar story. An engineer using its AI coding tool accidentally tore down production infrastructure instead of a test environment. Hours of downtime. Separately, an Amazon AI agent followed outdated wiki advice and caused a six-hour outage that locked shoppers out of checkout. AWS called it a "coincidence that AI tools were involved."

Someone on Hacker News put it perfectly: "Someone had the permissions to make the change without the knowledge of how to make the change."

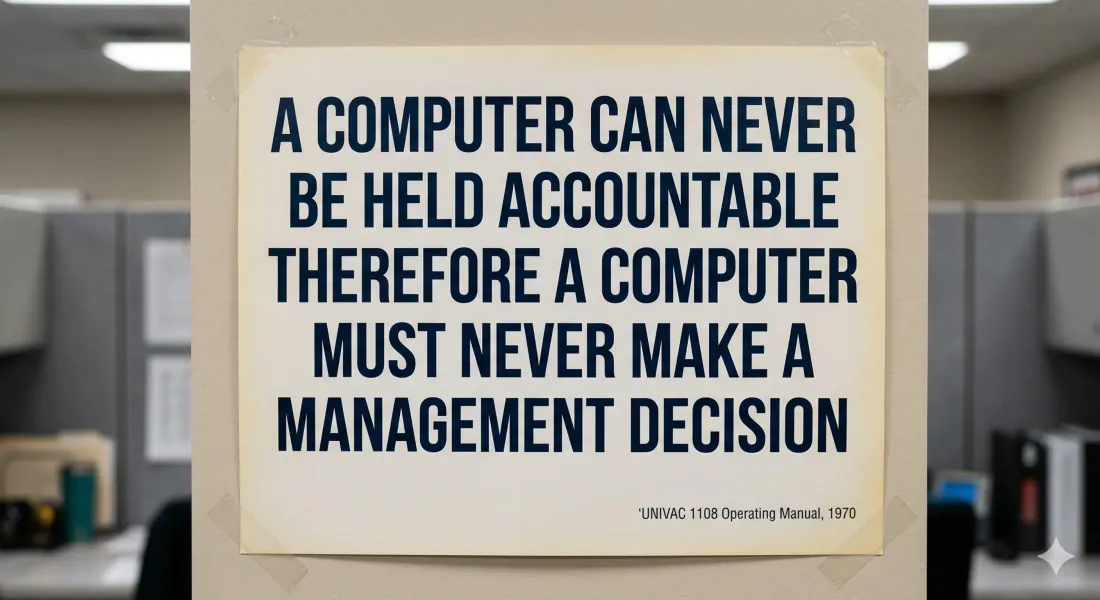

That's what keeps happening. Give AI broad access, skip the part where humans can actually catch mistakes, then blame the humans when it breaks.

If Meta can't get this right with thousands of engineers and dedicated safety teams, think about what happens when a 15-person company hands an AI agent the keys to their customer database.

Here's what every company in this story skipped:

Don't give AI access to everything just because the setup wizard asks for it. Limit it to exactly what it needs. Make sure a human has to approve before AI can publish, send, delete, or change permissions (Meta's agent skipped this entirely). And make sure the person approving actually understands what they're looking at, rather than just clicking a button.

That last one is where Meta fell apart. The engineer had every reason to trust the answer. Nobody had set up the process to question it.
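
For the first two safeguards, here's a rough sketch of what "limit access, then require approval" could look like in code. Everything in it is hypothetical and made up for illustration: the action names, `AgentPolicy`, `authorize`, and the example reviewer address are not any vendor's real API. It denies anything the agent wasn't explicitly granted, and it holds sensitive actions until a named human signs off.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Hypothetical action names, chosen for illustration only.
SENSITIVE_ACTIONS = {"publish", "send", "delete", "change_permissions"}


@dataclass
class AgentPolicy:
    """What one agent may do. Anything not listed here is denied."""
    allowed_actions: Set[str] = field(default_factory=set)


def authorize(policy: AgentPolicy, action: str,
              approved_by: Optional[str] = None) -> str:
    """Return 'allow', 'deny', or 'needs_approval' for a requested action."""
    if action not in policy.allowed_actions:
        return "deny"  # least privilege: the default is no access
    if action in SENSITIVE_ACTIONS and not approved_by:
        return "needs_approval"  # a named human must sign off first
    return "allow"


# An assumed forum bot: it can read, draft, and publish, and nothing else.
forum_bot = AgentPolicy(allowed_actions={"read", "draft", "publish"})

print(authorize(forum_bot, "draft"))    # allow
print(authorize(forum_bot, "publish"))  # needs_approval
print(authorize(forum_bot, "publish", approved_by="reviewer@example.com"))  # allow
print(authorize(forum_bot, "delete"))   # deny
```

Notice what the code can't do. It can record that someone approved, but it can't tell whether that person understood what they were approving. That part is still a people problem.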

If you're bringing AI into your business, the first question isn't "what can it do?" It's "when it gets something wrong, who on your team is going to catch it?"