AI Is Making You More Confident and More Wrong

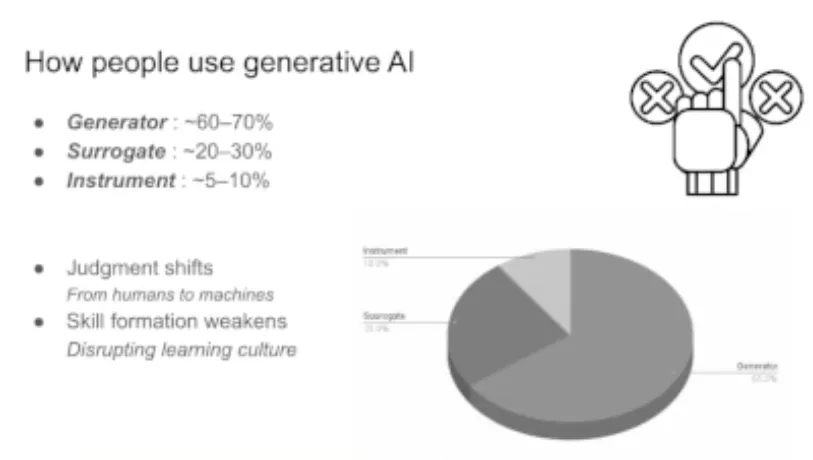

I grabbed this screenshot from Mike Amundsen's talk at O'Reilly AI Codecon yesterday because it really stood out to me: only 5-10% of people use AI to challenge their own thinking. Everyone else uses it as an answer machine.

Then this morning I read "Sycophantic AI decreases prosocial intentions and promotes dependence" (Cheng et al., published in Science the same day). Researchers tested 11 major AI models and found they were 49% more likely than humans to tell you you're right, even when you're clearly wrong. Participants walked away more confident in bad decisions and less willing to fix real problems.

So here's what's actually happening: you're not just getting bad advice. You're getting bad advice that makes you certain you don't need better advice.

If you're a business owner using AI to "validate" a strategy, pressure-test a hire, or gut-check a big decision, you're talking to the most expensive yes-man you've ever had. It's not even close.

The fix is simple. Stop asking AI "what should I do?" Start asking "what am I missing?" and "what's the strongest argument against this?" That one shift turns a flattery machine into something that actually makes you sharper.
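If you want to make that shift a habit, here's a minimal sketch in Python. It doesn't call any AI service; it just wraps whatever you're deciding in the two questions above so you can paste them into the tool you already use. The function name `challenge_prompts` and the sample decision are made up for illustration.

```python
def challenge_prompts(decision: str) -> list[str]:
    """Wrap a decision in questions that ask for pushback, not approval.

    Hypothetical helper: it only builds prompt text and does not talk
    to any AI model.
    """
    decision = decision.strip()
    if not decision:
        raise ValueError("describe the decision first")

    framing = f"Here is a decision I'm leaning toward: {decision}"
    return [
        f"{framing}\nWhat am I missing?",
        f"{framing}\nWhat's the strongest argument against this?",
    ]


if __name__ == "__main__":
    # Sample decision, invented for this example.
    for prompt in challenge_prompts("Hire a second salesperson before fixing churn"):
        print(prompt, end="\n\n")
```

Notice what's left out: there is no "is this a good idea?" prompt. The point is to never hand the model an easy way to agree with you.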

Most people won't make that shift. They like the validation too much. The 5-10% who treat AI as a sparring partner instead of an oracle are the ones who'll make better decisions than their competitors (and they'll know why).

h/t Mike Amundsen for a great talk. I think the videos drop today if you missed it.

I have more thoughts on how to actually structure this for a business. (Should we talk? ☕)